Activation functions in machine learning

Why do you need an activation function in inference, ML, or ANNs?

Despite the huge number of blogs, online courses, videos, and technical articles describing the various types of activation functions you can use to predict something (inference), you may find it difficult at first (like I did) to understand what activation functions are and why you absolutely need them in practice when you approach machine learning, statistical inference, and artificial neural networks. And the most fascinating part of all this is that they are in our brain. In this article, I will try to explain simply and briefly what activation functions are.

Linear relation between data

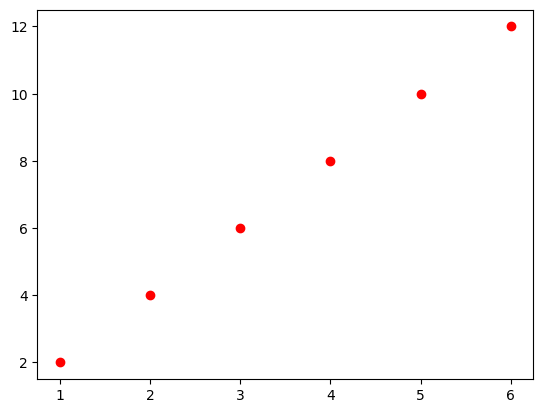

Inference means predicting something (Y, the dependent value) from one or more data points or features (X1, …, Xn, the independent values). The simplest and most basic problem can be expressed as the following math expression:

Y = w*x

where “Y” is what you want to predict, “x” is the independent variable, and “w” is what you have to learn. This type of problem can be represented as a linear relation between “x” and “Y”.

“w” is 2 … easy to predict …
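As a minimal sketch of how “w” could be learned from data, assume a handful of made-up points that follow Y = 2*x exactly. The values and the helper name `fit_w` are illustrative assumptions, not taken from the figure. For a line through the origin, the least-squares estimate of “w” is sum(x*y) / sum(x*x):

```python
def fit_w(xs, ys):
    """Least-squares estimate of w for the model Y = w*x (no intercept)."""
    numerator = sum(x * y for x, y in zip(xs, ys))
    denominator = sum(x * x for x in xs)
    return numerator / denominator


# Hypothetical data: every point lies exactly on Y = 2*x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w = fit_w(xs, ys)
print(w)  # 2.0
```

With perfectly linear data the estimate recovers the slope exactly, which is why this case is “easy to predict”.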

Non-linear relation between data

A “perfect” linear relation between “x” and “Y” rarely represents a real problem (otherwise it would not be a problem and you would not have to predict anything…). The image below shows instead a type of relation we could observe in a real “YES/NO” problem (binary relation).

Trying to solve this problem with a “linear inference” (orange line) will fail, as shown below.
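One way to see the failure in numbers is to fit an ordinary least-squares straight line to a small binary dataset. This is only a sketch: the six points, the 0/1 encoding of NO/YES, and the helper name `fit_line` are assumptions made for illustration, and the line here has an intercept, which may differ from the orange line in the figure.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope*x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical binary data: NO (0) for negative x, YES (1) for positive x.
xs = [-3.0, -2.0, -1.0, 1.0, 2.0, 3.0]
ys = [0.0, 0.0, 0.0, 1.0, 1.0, 1.0]

slope, intercept = fit_line(xs, ys)
predictions = [slope * x + intercept for x in xs]

for x, y, p in zip(xs, ys, predictions):
    print(f"x={x:+.0f}  target={y:.0f}  linear prediction={p:.3f}")
```

On this data the line predicts a value below 0 at x = -3 and above 1 at x = 3, so its output cannot be read as a YES/NO answer or as a probability, and it keeps growing without bound as “x” moves further from zero.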

The sigmoid activation function

What happens if we apply a sigmoid activation function to our “x”? (green line)
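The sigmoid function is defined as sigmoid(z) = 1 / (1 + e^(-z)), and it squashes any real number into the range between 0 and 1. A rough sketch of what applying it looks like, using hypothetical binary data (the points and the 0.5 decision threshold are assumptions for illustration, not values from the figure):

```python
import math


def sigmoid(z):
    """Sigmoid 1 / (1 + e^(-z)), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    exp_z = math.exp(z)
    return exp_z / (1.0 + exp_z)


# Hypothetical binary data: NO (0) for negative x, YES (1) for positive x.
xs = [-3.0, -2.0, -1.0, 1.0, 2.0, 3.0]
ys = [0, 0, 0, 1, 1, 1]

activations = [sigmoid(x) for x in xs]
# Assumed decision rule: answer YES when the activation is at least 0.5.
decisions = [1 if a >= 0.5 else 0 for a in activations]

for x, y, a, d in zip(xs, ys, activations, decisions):
    print(f"x={x:+.0f}  target={y}  sigmoid={a:.3f}  decision={d}")
```

Every activation stays strictly between 0 and 1, and thresholding at 0.5 reproduces the YES/NO targets for this toy data. In a real model the input to the sigmoid would typically be a learned quantity such as w*x rather than the raw “x”.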

Activation functions in machine learning was originally published in Artificial Intelligence in Plain English on Medium.

https://ai.plainenglish.io/activation-functions-in-machine-learning-99d469b14746?source=rss—-78d064101951—4

By: Immagi

Published Date: Sun, 11 Jun 2023 13:36:19 GMT
